In the fast-paced environment of software delivery, the integrity of a User Story often determines the success of the sprint. A well-crafted story card acts as a contract between the business, the development team, and quality assurance. It is not merely a task description; it is a blueprint for value delivery. When quality checks are applied rigorously to every story card, teams reduce rework, improve predictability, and ensure that the final product aligns with user needs. This guide outlines the essential validation steps required to maintain high standards throughout the development lifecycle.

Many organizations struggle with inconsistent story quality, leading to confusion during implementation and unexpected defects in production. By implementing a structured approach to reviewing story cards, teams can catch ambiguities early, prevent scope creep, and foster a culture of accountability. The following sections detail the specific checks, criteria, and processes necessary to elevate the reliability of your backlog.

1. Understanding the Anatomy of a Quality Story 🧩

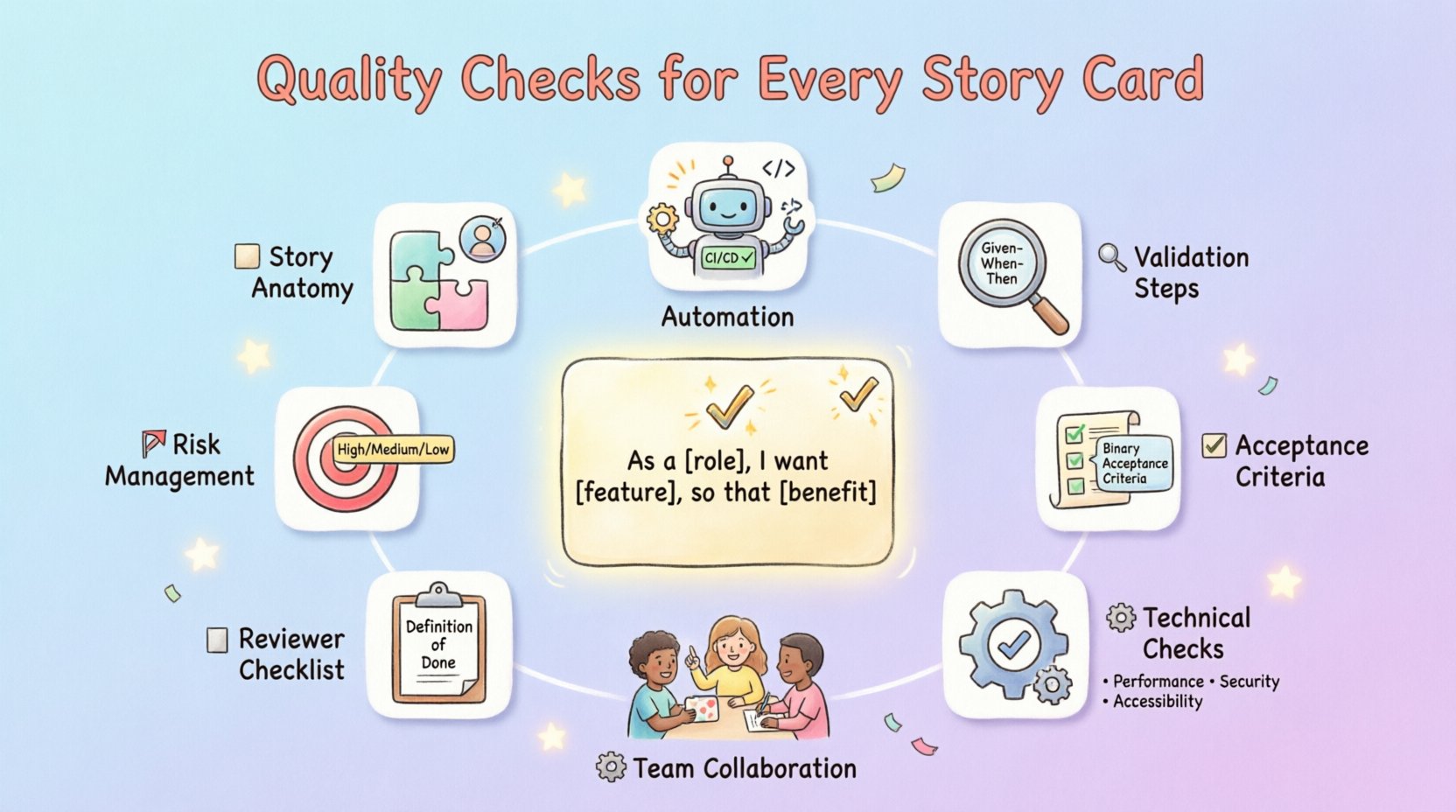

Before diving into specific checks, it is vital to understand what constitutes a robust User Story. A quality story is not just a sentence; it is a structured element that contains specific information. The standard format follows the “As a [role], I want [feature], so that [benefit]” structure. However, the format alone does not guarantee quality. Each component must be scrutinized.

- User Role Clarity: Who is the primary beneficiary? Is the persona specific enough to guide design decisions?

- Actionable Feature: Does the feature describe a specific behavior rather than a vague outcome?

- Clear Value Proposition: Is the “so that” clause explicit about the business or user value?

Without these elements, developers may make assumptions that diverge from stakeholder expectations. For instance, a story stating “Improve login speed” lacks context: Is it for mobile? For a specific user segment? How much faster, and measured how? Quality checks ensure these details are filled in before work begins.
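Part of this template check can be automated. The sketch below assumes stories are stored as plain text following the “As a [role], I want [feature], so that [benefit]” format. The regular expression and the `GENERIC_ROLES` list are illustrative assumptions, not a standard. The script only catches structural problems; whether the stated value is genuine still needs a human reviewer.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Assumed template: "As a <role>, I want <feature>, so that <benefit>" (case-insensitive).
STORY_PATTERN = re.compile(
    r"^\s*as an?\s+(?P<role>.+?),\s*i want\s+(?P<feature>.+?),?\s+so that\s+(?P<benefit>.+?)\.?\s*$",
    re.IGNORECASE,
)

# Hypothetical list of personas too vague to guide design decisions.
GENERIC_ROLES = {"user", "users", "someone", "person"}


@dataclass
class StoryParts:
    role: str
    feature: str
    benefit: str


def parse_story(text: str) -> Optional[StoryParts]:
    """Split a story into its three parts, or return None if it ignores the template."""
    match = STORY_PATTERN.match(text)
    if match is None:
        return None
    return StoryParts(match["role"].strip(), match["feature"].strip(), match["benefit"].strip())


def story_issues(text: str) -> List[str]:
    """Return human-readable problems found in the story text (empty list if none)."""
    parts = parse_story(text)
    if parts is None:
        return ["does not follow the 'As a ..., I want ..., so that ...' template"]
    issues = []
    if parts.role.lower() in GENERIC_ROLES:
        issues.append(f"role '{parts.role}' is too generic to guide design")
    return issues
```

With this sketch, “Improve login speed” fails the template check outright, while “As a user, I want faster login, so that I waste less time” parses but is flagged for its generic role.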

2. Pre-Development Validation Steps 🧐

Validation begins long before the first line of code is written. This phase, often occurring during refinement or grooming sessions, focuses on clarity and feasibility. Teams should perform a “Sanity Check” on the backlog items to ensure they are ready for estimation.

2.1 Ambiguity Reduction

Words like “fast,” “modern,” or “easy” are subjective. Quality checks require replacing these with measurable terms. Instead of “fast,” use “loads within 2 seconds.” Instead of “modern,” specify the design system version. This eliminates interpretation gaps between team members.
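A minimal sketch of an ambiguity scan, assuming the story text is available as a string. The `VAGUE_TERMS` tuple holds the words named above plus a couple of similar ones; it is a hypothetical starting list that each team would extend. A flagged term is a prompt for a refinement conversation, not an automatic failure.

```python
import re
from typing import List

# Hypothetical starting list of subjective terms; extend it for your domain.
VAGUE_TERMS = ("fast", "modern", "easy", "user-friendly", "optimized")


def find_vague_terms(text: str) -> List[str]:
    """Return the vague terms that appear as whole words in the text, in list order."""
    found = []
    for term in VAGUE_TERMS:
        # \b keeps "fast" from matching inside words such as "breakfast".
        if re.search(rf"\b{re.escape(term)}\b", text, re.IGNORECASE):
            found.append(term)
    return found
```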

2.2 Dependency Mapping

Every story exists within a larger ecosystem. A quality check must identify:

- Internal Dependencies: Are there other stories in the current sprint that must be completed first?

- External Dependencies: Does the story rely on third-party APIs, databases, or services outside the team’s control?

- Data Requirements: What data is needed to test this functionality? Is test data available?
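Internal dependencies form a directed graph, so a topological sort yields an order in which the stories can be completed and exposes circular dependencies that no sprint plan can satisfy. This sketch uses Python's standard `graphlib` module (Python 3.9+); the story IDs are hypothetical, and external dependencies could be added to the same graph as extra nodes.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Set

# Hypothetical sprint backlog: each story ID maps to the IDs it depends on.
SPRINT_DEPENDENCIES: Dict[str, Set[str]] = {
    "STORY-3": {"STORY-1", "STORY-2"},
    "STORY-2": {"STORY-1"},
    "STORY-1": set(),
}


def delivery_order(deps: Dict[str, Set[str]]) -> List[str]:
    """Return the stories ordered so that each one comes after everything it depends on."""
    return list(TopologicalSorter(deps).static_order())


def has_cycle(deps: Dict[str, Set[str]]) -> bool:
    """A cycle means no valid order exists, so the stories need to be re-split."""
    try:
        delivery_order(deps)
    except CycleError:
        return True
    return False
```

For the backlog above, `delivery_order` returns `["STORY-1", "STORY-2", "STORY-3"]`, which is the only valid order.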

2.3 Estimation Readiness

If a team cannot estimate a story, it is likely not well-defined. The quality check verifies that the scope is understood well enough to assign a size (e.g., story points). If the effort is unknown, the story should be split or researched further before entering the active development queue.

3. Crafting Unambiguous Acceptance Criteria ✅

Acceptance Criteria (AC) are the conditions that a software product must satisfy to be accepted by a user, customer, or other stakeholder. They define what “done” means for the behavior of a specific story. Poorly written AC lead to testing gaps and failed deployments.

3.1 The Rule of Specificity

Every acceptance criterion should be binary: it either passes or fails, with no room for “maybe.” Use the following structure for each criterion:

- Given: The initial context or state of the system.

- When: The action or event that triggers the behavior.

- Then: The expected outcome or result.
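To show how a Given/When/Then criterion maps one-to-one onto a binary test, here is a sketch for the AC example used in this guide, “User can reset password via email.” The `PasswordResetService` class is a hypothetical in-memory stand-in for the real system. The test function follows pytest naming conventions but also runs as a plain function call.

```python
from typing import Iterable, List


class PasswordResetService:
    """Hypothetical in-memory stand-in for the system under test."""

    def __init__(self, registered_emails: Iterable[str]) -> None:
        self.registered = set(registered_emails)
        self.outbox: List[str] = []  # addresses a reset email was "sent" to

    def request_reset(self, email: str) -> bool:
        if email in self.registered:
            self.outbox.append(email)
            return True
        return False


def test_registered_user_receives_reset_email() -> None:
    # Given: a registered user with a known email address
    service = PasswordResetService(["ana@example.com"])
    # When: the user requests a password reset
    accepted = service.request_reset("ana@example.com")
    # Then: the request is accepted and exactly one reset email goes to that address
    assert accepted
    assert service.outbox == ["ana@example.com"]
```

Each clause becomes one part of the test, and the `Then` clause becomes assertions that either pass or fail.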

3.2 Covering Edge Cases

Most stories focus on the happy path. Quality checks require the team to explicitly address edge cases. This includes:

- What happens if the input field is empty?

- What happens if the network connection drops?

- What happens if the user tries to perform an action they do not have permission for?

- What are the limits of data entry (e.g., character count)?
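Each edge case in the list above can become its own binary check. This sketch assumes a hypothetical display-name field with a 50-character limit and a hypothetical rule that only admins may delete a project. The network-drop case usually needs a stubbed client and is left out here.

```python
from typing import Callable, Dict, List, Optional, Tuple

MAX_NAME_LENGTH = 50  # hypothetical limit; the real value belongs in the AC


def validate_display_name(name: str) -> Optional[str]:
    """Return an error message for invalid input, or None when the name is accepted."""
    if not name.strip():
        return "Display name is required."
    if len(name) > MAX_NAME_LENGTH:
        return f"Display name must be at most {MAX_NAME_LENGTH} characters."
    return None


def can_delete_project(role: str) -> bool:
    """Hypothetical permission rule: only admins may delete a project."""
    return role == "admin"


# One binary check per edge case: each lambda returns True when the behavior is correct.
EDGE_CASES: List[Tuple[str, Callable[[], bool]]] = [
    ("empty input is rejected",
     lambda: validate_display_name("   ") == "Display name is required."),
    ("input at the limit is accepted",
     lambda: validate_display_name("a" * MAX_NAME_LENGTH) is None),
    ("input over the limit is rejected",
     lambda: validate_display_name("a" * (MAX_NAME_LENGTH + 1)) is not None),
    ("user without permission is refused",
     lambda: not can_delete_project("viewer")),
]


def run_edge_cases() -> Dict[str, bool]:
    """Run every edge-case check and report pass (True) or fail (False) by name."""
    return {name: check() for name, check in EDGE_CASES}
```

Testing both the value at the limit and one past it catches the common off-by-one error in length checks.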

3.3 Testability

A criterion is useless if it cannot be tested. Ensure every AC has a corresponding test case. If a criterion relies on visual aesthetics without defined standards, it should be updated to reference a specific design asset or color code.
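Traceability between criteria and tests can be checked mechanically. This sketch assumes each AC has an ID (e.g. `AC-1`) and that every test case records which IDs it verifies. Both conventions, and the sample data, are hypothetical.

```python
from typing import Dict, Iterable, List, Set


def untested_criteria(criteria_ids: Iterable[str], test_links: Dict[str, Set[str]]) -> List[str]:
    """Return the acceptance-criterion IDs that no test case claims to verify.

    test_links maps a test-case name to the set of AC IDs it covers.
    """
    covered: Set[str] = set().union(*test_links.values())
    return [ac for ac in criteria_ids if ac not in covered]


# Hypothetical story with three criteria, only two of which are linked to tests.
STORY_CRITERIA = ["AC-1", "AC-2", "AC-3"]
TEST_LINKS = {
    "test_reset_email_sent": {"AC-1"},
    "test_reset_link_expires": {"AC-2"},
}
```

Here `untested_criteria(STORY_CRITERIA, TEST_LINKS)` reports `AC-3`, which signals that either a test is missing or the criterion is not testable as written.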

4. Definition of Done vs. Acceptance Criteria 🔄

Confusion often arises between Acceptance Criteria and the Definition of Done (DoD). While AC applies to individual stories, DoD applies to the entire team’s workflow. A quality check must verify that both are aligned.

| Aspect | Acceptance Criteria (AC) | Definition of Done (DoD) |
|---|---|---|
| Scope | Specific to one User Story | Applies to all work items |
| Content | Functional requirements and behaviors | Quality standards (testing, code review, documentation) |
| Ownership | Defined by Product Owner | Defined by the Development Team |
| Example | “User can reset password via email” | “Code reviewed, unit tests passed, deployed to staging” |

When reviewing a story, ensure that the AC neither duplicates nor contradicts the DoD. The AC should be specific to the feature, while the DoD ensures the code meets general quality standards.

5. Technical and Non-Functional Checks ⚙️

Functional requirements are only half the battle. A story can work perfectly but fail due to performance, security, or accessibility issues. These checks are often overlooked until late in the cycle.

5.1 Performance Standards

Does the story introduce new processing load? If so, quality checks must define performance metrics. For example, a new search function should not increase homepage load time by more than 10% over the current baseline. These metrics must be documented in the story card.
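A budget such as “no more than 10% slower” is easiest to enforce when it is computed the same way every time. The sketch below compares median latencies from a baseline run and a candidate run; the use of the median, the function names, and the sample timings are assumptions for illustration.

```python
from statistics import median
from typing import Sequence


def regression(baseline_ms: Sequence[float], candidate_ms: Sequence[float]) -> float:
    """Relative change in median latency: 0.10 means the candidate is 10% slower."""
    base = median(baseline_ms)
    return (median(candidate_ms) - base) / base


def within_budget(baseline_ms: Sequence[float], candidate_ms: Sequence[float],
                  max_regression: float = 0.10) -> bool:
    """True when the candidate's median latency is at most max_regression above the baseline."""
    return regression(baseline_ms, candidate_ms) <= max_regression
```

With hypothetical homepage timings of 790, 800 and 810 ms before the change and 850, 860 and 870 ms after, the medians are 800 and 860 ms, a 7.5% regression that stays within budget. The median keeps one slow outlier from failing the check; teams that care about tail latency may prefer a high percentile instead.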

5.2 Security Compliance

Every story must be checked against security baselines. This includes:

- Authentication: Does the feature require login? If so, how is it managed?

- Data Protection: Is sensitive data encrypted in transit and at rest?

- Input Validation: Are all user inputs validated, and are database queries parameterized, to prevent injection attacks?

- Permissions: Are role-based access controls (RBAC) enforced correctly?
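Two of these checks translate directly into code. The sketch below uses Python's built-in `sqlite3` module: the `?` placeholder sends user input as a bound parameter instead of splicing it into the SQL string, and a deny-by-default role lookup enforces permissions. The `orders` table, the `ROLE_PERMISSIONS` mapping, and the function names are hypothetical.

```python
import sqlite3
from typing import Dict, List, Set

# Hypothetical RBAC table: role -> permissions it grants.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "admin": {"read_orders", "refund_orders"},
    "support": {"read_orders"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles get an empty permission set."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def find_order_ids(conn: sqlite3.Connection, role: str, customer_email: str) -> List[int]:
    if not is_allowed(role, "read_orders"):
        raise PermissionError(f"role {role!r} may not read orders")
    # The "?" placeholder binds customer_email as data, so input such as
    # "x' OR '1'='1" is compared literally instead of changing the query.
    rows = conn.execute("SELECT id FROM orders WHERE email = ? ORDER BY id", (customer_email,))
    return [row[0] for row in rows]
```

An acceptance test for the story can then assert both that a classic injection string returns no rows and that an unlisted role is refused.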

5.3 Accessibility (A11y)

Software must be usable by everyone. Quality checks should verify compliance with WCAG (Web Content Accessibility Guidelines). Key checks include:

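- Do all meaningful images have alt text? (Purely decorative images should use an empty alt attribute.)

- Does text meet the minimum color contrast ratios (under WCAG 2.x level AA, 4.5:1 for normal text and 3:1 for large text)?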

- Can the interface be navigated using only a keyboard?

- Are form labels associated with their inputs?
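The contrast and alt-text checks can be scripted. The contrast functions follow the WCAG 2.x definitions of relative luminance and contrast ratio, (L1 + 0.05) / (L2 + 0.05) with L1 the lighter color. The alt-text scan uses the standard-library `html.parser` and is a rough sketch, not a substitute for a full accessibility audit or for manual keyboard testing.

```python
from html.parser import HTMLParser
from typing import List, Tuple

RGB = Tuple[int, int, int]


def _channel(value: int) -> float:
    """Linearize one 8-bit sRGB channel as in the WCAG 2.x relative-luminance definition."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    r, g, b = (_channel(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: RGB, bg: RGB) -> float:
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: RGB, bg: RGB, large_text: bool = False) -> bool:
    """WCAG 2.x level AA: at least 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)


class _MissingAltFinder(HTMLParser):
    def __init__(self) -> None:
        super().__init__()
        self.missing: List[str] = []

    def handle_starttag(self, tag, attrs):
        # alt="" is valid for decorative images, so only a missing attribute is flagged.
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src") or "<no src>")


def images_missing_alt(html: str) -> List[str]:
    """Return the src of every <img> that has no alt attribute at all."""
    finder = _MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

For example, mid-gray `#767676` (118, 118, 118) on white comes out at roughly 4.54:1 and passes AA for normal text, while `#777777` (119, 119, 119) is roughly 4.48:1 and fails.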

5.4 Compatibility

Does the story need to work across multiple browsers or devices? The story card should specify the matrix of supported environments, and any browser or device outside that matrix should be noted as a known limitation.

6. The Reviewer’s Checklist 📝

To streamline the validation process, teams can adopt a standardized checklist. This ensures consistency regardless of who reviews the story. The following table outlines the critical checkpoints for every story card.

| Category | Check Question | Pass/Fail |
|---|---|---|
| Clarity | Is the user persona clearly defined? | ☐ |
| Clarity | Is the business value explicitly stated? | ☐ |
| Scope | Is the story small enough to fit in a sprint? | ☐ |
| Scope | Are all dependencies identified? | ☐ |
| Criteria | Are acceptance criteria binary (Pass/Fail)? | ☐ |
| Criteria | Are negative test cases included? | ☐ |
| Technical | Are performance requirements listed? | ☐ |
| Technical | Are security requirements addressed? | ☐ |
| Technical | Is accessibility considered? | ☐ |
| Design | Are wireframes or mockups linked? | ☐ |
| Testing | Is test data available or created? | ☐ |

Using this checklist during refinement meetings ensures that no critical aspect is overlooked. It shifts the burden of quality from the end of the cycle to the beginning.
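The checklist can also live as data, so that every review applies exactly the same questions. The sketch below encodes a subset of the rows as predicates over a story card represented as a dictionary. The field names and the 8-point sprint limit are hypothetical and should be replaced with your team's own.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple

StoryCard = Dict[str, Any]
MAX_POINTS = 8  # hypothetical upper bound for "fits in a sprint"


@dataclass(frozen=True)
class Check:
    category: str
    question: str
    passes: Callable[[StoryCard], bool]


# A subset of the checklist rows; field names such as "persona" are hypothetical.
CHECKLIST: List[Check] = [
    Check("Clarity", "Is the user persona clearly defined?", lambda s: bool(s.get("persona"))),
    Check("Clarity", "Is the business value explicitly stated?", lambda s: bool(s.get("benefit"))),
    Check("Scope", "Is the story small enough to fit in a sprint?",
          lambda s: s.get("points") is not None and s["points"] <= MAX_POINTS),
    Check("Criteria", "Are negative test cases included?",
          lambda s: any(ac.get("negative") for ac in s.get("criteria", []))),
    Check("Design", "Are wireframes or mockups linked?", lambda s: bool(s.get("design_links"))),
    Check("Testing", "Is test data available or created?", lambda s: bool(s.get("test_data"))),
]


def review(story: StoryCard) -> List[Tuple[str, str]]:
    """Return (category, question) for every failed check; an empty list means all checks pass."""
    return [(c.category, c.question) for c in CHECKLIST if not c.passes(story)]
```

Rows that need judgment, such as whether security requirements are adequate, are better left as manual questions; encoding only the mechanical ones keeps the tool honest.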

7. Managing Dependencies and Risks 🎯

Stories rarely exist in a vacuum. They interact with other parts of the system. Identifying risks early prevents bottlenecks. A quality check must assess the risk profile of the story.

7.1 Risk Assessment

High-risk stories require more scrutiny. Risks include:

- Technical Complexity: Is the technology new to the team?

- Business Impact: What is the impact if this feature fails?

- Regulatory Compliance: Does this feature touch on legal requirements (e.g., GDPR, HIPAA)?

7.2 Mitigation Strategies

For every identified risk, there should be a mitigation plan documented. For example, if a third-party API is unstable, the story should include a fallback mechanism or a mock service implementation. This ensures the story can be completed even if external factors change.
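A fallback for an unstable third-party API can be as simple as a bounded retry followed by a cached or mocked value. In this sketch, `UnstableRateApi` is a hypothetical stand-in for the external service and `LAST_KNOWN_RATE` plays the role of the fallback. A production version would typically add backoff between attempts and logging.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def with_fallback(primary: Callable[[], T], fallback: Callable[[], T], retries: int = 2) -> T:
    """Try `primary` up to retries + 1 times; if every attempt raises ConnectionError, use `fallback`."""
    for _ in range(retries + 1):
        try:
            return primary()
        except ConnectionError:
            continue
    return fallback()


class UnstableRateApi:
    """Hypothetical external exchange-rate service that fails a set number of times."""

    def __init__(self, failures_before_success: int) -> None:
        self.remaining_failures = failures_before_success
        self.calls = 0

    def get_rate(self) -> float:
        self.calls += 1
        if self.remaining_failures > 0:
            self.remaining_failures -= 1
            raise ConnectionError("rate service unavailable")
        return 1.10


LAST_KNOWN_RATE = 1.08  # e.g. a cached value, or a mock service's fixed response
```

Because the fallback is passed in as a function, the same wrapper works with a mock service during development and with a cache in production.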

8. Common Defects in Story Cards ⚠️

Even experienced teams make mistakes. Recognizing common patterns of poor story quality helps in prevention. Below are frequent defects and how to correct them.

| Defect Type | Description | Correction Strategy |
|---|---|---|
| Vagueness | Using words like “user-friendly” or “optimized”. | Replace with metrics and specific behaviors. |
| Implicit Assumptions | Assuming knowledge that isn’t documented. | Document all assumptions explicitly. |
| Scope Creep | Combining multiple features into one story. | Split the story into smaller, independent units. |
| Missing AC | No acceptance criteria provided. | Require AC as a blocker for moving to In Progress. |
| Test Gaps | No mention of testing requirements. | Add a dedicated testing section in the card. |

9. Maintaining Velocity Through Quality 🏎️

There is a misconception that slowing down to check quality reduces velocity. In reality, skipping quality checks slows delivery significantly because of rework. A defect found in production is typically far more expensive to fix than one caught while the story card is being written.

By enforcing these checks, teams achieve:

- Higher First-Time Right Rate: Less time spent fixing bugs later.

- Reduced Context Switching: Developers spend less time asking clarifying questions.

- Predictable Sprints: Work that is started is more likely to be finished.

- Improved Morale: Teams feel less stressed when requirements are clear.

10. Collaboration and Continuous Improvement 🤝

Quality is a shared responsibility. It is not solely the job of the Product Owner or the QA engineer. It requires collaboration across the entire squad. Regular retrospectives should include a discussion on story card quality. What went wrong? Which stories were unclear? How can the checklist be improved?

Feedback loops are essential. If developers find that certain types of stories are consistently blocked by missing information, the intake process should be adjusted. This might involve changing the template or adding mandatory fields to the story creation form.

11. The Impact of Technical Debt on Stories 🏗️

Quality checks must also account for technical debt. Sometimes a story cannot be implemented cleanly due to existing code structure. The story card should acknowledge this.

- Refactoring Stories: Are there stories dedicated to improving code quality without adding features?

- Debt Payment: Is the story explicitly paying down debt, or is it introducing new debt?

- Documentation: Is the technical impact documented for future maintainers?

Ignoring technical debt in story cards leads to a fragile system. Over time, the cost of change increases, and velocity drops. Balancing feature delivery with maintenance is a key part of long-term quality assurance.

12. Automating Quality Checks Where Possible 🤖

While human review is irreplaceable, automation can handle repetitive checks. CI/CD pipelines can enforce:

- Linting: Code style consistency.

- Unit Test Coverage: Ensuring new code meets coverage thresholds.

- Security Scanning: Automated vulnerability detection.

- Accessibility Scanning: Automated checks for contrast and ARIA labels.

These automated gates act as a safety net: only changes that satisfy the automated parts of the Definition of Done can be merged. They support the manual checks by catching errors before human review.
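As a sketch of one such gate, the script below fails the build when unit-test coverage drops below a threshold. It assumes a JSON report in the layout written by coverage.py's `coverage json` command, where `totals.percent_covered` holds the overall percentage. The 80% threshold, the report path, and the function names are arbitrary examples.

```python
import json
import sys
from typing import Dict


def coverage_ok(report: dict, threshold: float) -> bool:
    """Assumes coverage.py's JSON layout: {"totals": {"percent_covered": <float>, ...}}."""
    return report["totals"]["percent_covered"] >= threshold


def gate_exit_code(results: Dict[str, bool]) -> int:
    """Print each failed gate; a non-zero exit code fails the CI job and blocks the merge."""
    failed = [name for name, ok in results.items() if not ok]
    for name in failed:
        print(f"quality gate failed: {name}", file=sys.stderr)
    return 1 if failed else 0


def main(report_path: str = "coverage.json", threshold: float = 80.0) -> int:
    with open(report_path) as fh:
        report = json.load(fh)
    return gate_exit_code({"unit test coverage": coverage_ok(report, threshold)})
```

A CI step would generate the report and then call `sys.exit(main())`; other gates, such as lint or security scan results, can be added to the same `results` dictionary.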

13. Finalizing the Story Card for Handoff 📤

The final step before a story moves to “In Progress” is the handoff. This is a formal agreement that the story is ready. The checklist confirms that:

- All AC are defined.

- Designs are attached.

- Dependencies are resolved.

- Test data is prepared.

- Stakeholders have reviewed and approved.

This formalization reduces the “handoff friction” where developers wait for information. It creates a smooth flow from planning to production.

14. Adapting Checks for Different Contexts 🌍

Not all projects are the same. A startup might prioritize speed over documentation, while a bank prioritizes compliance over speed. Quality checks should be adaptable.

- Regulated Industries: Add compliance checklists to every story.

- Mobile Apps: Add device and OS version checks.

- API Development: Add schema and contract validation checks.

The core principles remain the same, but the specific details must align with the project context. Flexibility in the quality framework ensures it remains useful rather than bureaucratic.

15. Summary of Key Takeaways 📌

Implementing quality checks for every story card is a foundational practice for high-performing teams. It transforms the story from a vague task into a precise contract. By focusing on clarity, testability, and completeness, teams can reduce waste and deliver value consistently.

Key actions include:

- Enforcing the “As a [role], I want [feature], so that [benefit]” format.

- Writing binary Acceptance Criteria.

- Identifying dependencies and risks early.

- Validating Non-Functional Requirements.

- Using a standardized checklist for every item.

- Integrating automated quality gates.

When these practices become routine, the development process becomes smoother, and the product quality improves organically. The investment in story card quality pays dividends in reduced defects and higher team confidence.