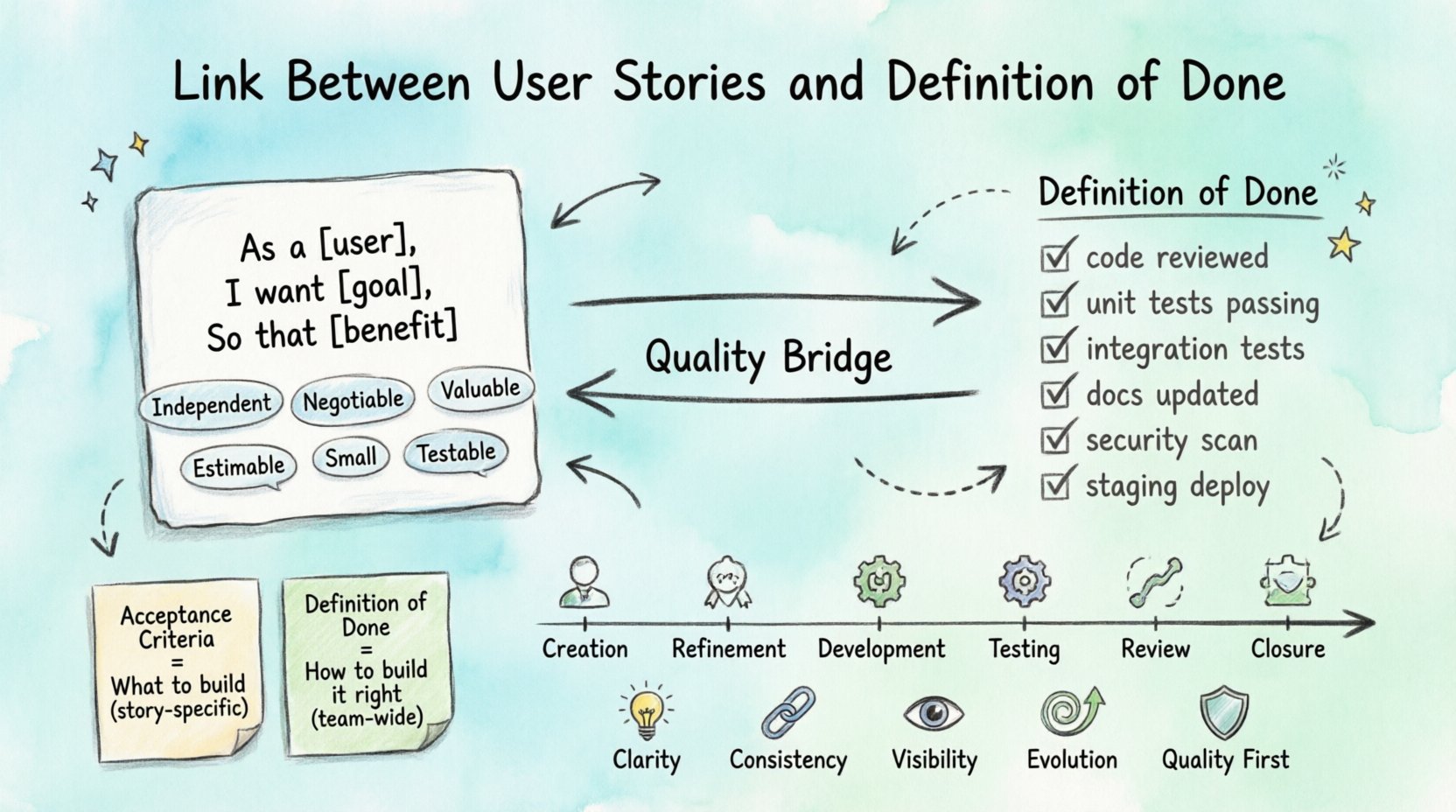

In software development, the connection between a user story and the definition of done is foundational, not merely procedural. A user story defines what needs to be built from the perspective of the end user, while the definition of done establishes the quality standards that work must meet before it is considered complete. Understanding this relationship helps teams deliver value consistently without compromising quality or introducing hidden technical debt.

Many teams struggle when these two concepts operate in isolation. A story might be marked as complete based on functionality alone, ignoring the non-functional requirements that keep the system stable. Conversely, a rigid definition of done might slow down delivery if not applied with context. This article explores the mechanics of this link, how to align them effectively, and why this alignment matters for long-term success.

🧩 Understanding the User Story 🎯

A user story is a brief, simple description of a feature told from the perspective of the person who desires the new capability. It follows a standard template:

- As a [type of user],

- I want [some goal],

- So that [some reason/benefit].

This format shifts focus from technical implementation to user value. However, the story itself is a placeholder for a conversation. It is an invitation to discuss requirements, constraints, and expectations. Without a clear endpoint, a story can remain in a state of perpetual development.

Key Components of a Strong Story

To ensure a story is actionable, it must meet specific criteria. These components guide the team during planning and execution:

- INVEST: Independent, Negotiable, Valuable, Estimable, Small, Testable.

- Acceptance Criteria: Specific conditions that must be met for the story to be accepted by the product owner.

- Context: Background information that helps developers understand the business logic.

When a story is well-defined, it reduces ambiguity. However, ambiguity is often where quality issues arise. This is where the definition of done steps in to provide a safety net.

🏁 Defining the Definition of Done ✅

The definition of done is a formal description of the state of the increment when it meets the quality measures required for the product. It is a checklist of activities that must be completed for a user story to be considered finished. Unlike acceptance criteria, which are specific to a single story, the definition of done applies to all stories within a team or product.

Why DoD Matters

Without a clear definition of done, teams risk accumulating technical debt. Features may work in the short term but become difficult to maintain, test, or deploy over time. A robust definition of done ensures that every increment is potentially shippable.

- Transparency: Everyone knows what “done” looks like.

- Quality Assurance: Non-functional requirements are met consistently.

- Velocity Stability: Predictable delivery rates because rework is minimized.

Common Elements of a Definition of Done

While specific items vary by team, most definitions include a mix of technical and process-based standards:

- Code reviewed by peers

- Unit tests written and passing

- Integration tests executed successfully

- Documentation updated

- Performance benchmarks met

- Security scan passed

- Deployed to a staging environment

🔄 How They Connect in the Workflow 🔗

The link between user stories and the definition of done is active throughout the development lifecycle. It is not a final checkpoint but a continuous filter. Every time a story moves from “In Progress” to “Done,” it must satisfy both its specific acceptance criteria and the team’s general definition of done.

The Flow of Value

Consider the lifecycle of a story:

- Creation: The story is added to the backlog with initial acceptance criteria.

- Refinement: The team discusses the story and ensures the DoD is understood.

- Development: Code is written, adhering to coding standards defined in the DoD.

- Testing: QA verifies the acceptance criteria and works through the DoD checklist.

- Review: Stakeholders review the increment against the story goal.

- Closure: The story is moved to Done only when all criteria and DoD items are met.

If a story meets its acceptance criteria but fails a DoD item (e.g., documentation is missing), it cannot be marked as complete. This prevents the accumulation of incomplete work.
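The closure rule can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed tool: the `Story` class, its fields, and the three DoD items are hypothetical names chosen for this sketch, and it assumes Python 3.9 or later.

```python
from dataclasses import dataclass, field

# Hypothetical team-wide Definition of Done (illustrative items only).
DEFINITION_OF_DONE = (
    "code reviewed",
    "unit tests passing",
    "documentation updated",
)


@dataclass
class Story:
    """A user story with its own acceptance criteria plus shared DoD tracking."""
    title: str
    acceptance_criteria: dict[str, bool]  # criterion -> has it been met?
    dod_completed: set[str] = field(default_factory=set)

    def unmet_items(self) -> list[str]:
        """Return every acceptance criterion and DoD item still outstanding."""
        unmet = [c for c, met in self.acceptance_criteria.items() if not met]
        unmet += [item for item in DEFINITION_OF_DONE if item not in self.dod_completed]
        return unmet

    def is_done(self) -> bool:
        """A story is done only when nothing is outstanding on either list."""
        return not self.unmet_items()


story = Story(
    title="Password reset",
    acceptance_criteria={"User can reset password via email": True},
    dod_completed={"code reviewed", "unit tests passing"},
)
print(story.is_done())      # False
print(story.unmet_items())  # ['documentation updated']
```

The acceptance criterion is met, yet the story stays open until the documentation item is ticked off, which is exactly the situation described above.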

📊 Acceptance Criteria vs. Definition of Done 🆚

Confusion often arises between acceptance criteria and the definition of done. While they are related, they serve different purposes. Understanding the distinction is critical for managing the link between stories and completion standards.

| Aspect | Acceptance Criteria | Definition of Done |
|---|---|---|
| Scope | Specific to a single user story | Applies to all user stories |
| Purpose | Defines what the feature does | Defines the quality of the feature |
| Stability | Changes frequently with requirements | Remains stable over time |
| Example | “User can reset password via email” | “Code is reviewed and unit tested” |

Acceptance criteria answer the question, “Did we build the right thing?” The definition of done answers, “Did we build the thing right?” Both must be satisfied for a story to be truly complete.

⚠️ Common Pitfalls When Separating Them ❌

When teams treat these concepts as separate entities, several issues emerge. Recognizing these pitfalls helps maintain the integrity of the development process.

1. The “Almost Done” Trap

Teams often mark a story as done because the feature works, even though other requirements are still pending. For example, the code runs correctly but has not been scanned for security vulnerabilities. This creates a false sense of progress: the story is functional but not production-ready.

2. DoD Creep

Over time, teams add items to the definition of done without removing old ones. This slows down delivery. If the DoD becomes too rigid, it can stifle innovation or make it difficult to deliver value quickly. The DoD should be reviewed periodically to ensure it remains relevant.

3. Ignoring Non-Functional Requirements

Acceptance criteria usually focus on functional behavior. If the definition of done does not explicitly include non-functional requirements (like performance, accessibility, or scalability), these are often overlooked. This results in a system that works but is slow or inaccessible.

4. Lack of Team Consensus

If the product owner, developers, and testers do not agree on what the definition of done entails, the link breaks. One person may think “testing complete” means unit tests, while another expects full regression testing. This misalignment causes friction during sprint reviews.

🛠️ Implementing the Connection Effectively 🛠️

To strengthen the link between user stories and the definition of done, teams should adopt specific practices. These steps help embed quality into the process rather than treating it as an afterthought.

1. Visualize the Standards

Make the definition of done visible on the team board. When a story card is moved to the Done column, it should be clear that every item on the DoD checklist has been addressed. This visual cue reinforces accountability.

2. Integrate DoD into Story Cards

Include a reference to the current definition of done directly on the story card or ticket. This serves as a constant reminder of the standards required. It prevents the team from forgetting specific requirements as the sprint progresses.

3. Conduct DoD Audits

Regularly audit completed stories to ensure the definition of done was actually followed. If a story was marked done but missed a DoD item, discuss why. Was the standard unclear? Was the time pressure too high? Use this data to improve the process.
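A lightweight audit might simply tally which DoD items are skipped most often. The sketch below assumes the completed-item data can be exported from the team's tracker; the story IDs, DoD items, and the `audit` function are hypothetical.

```python
from collections import Counter

# Hypothetical DoD and audit sample; real data would come from the team's tracker.
DEFINITION_OF_DONE = {"code reviewed", "unit tests passing", "security scan passed"}

completed_stories = {
    "STORY-1": {"code reviewed", "unit tests passing", "security scan passed"},
    "STORY-2": {"code reviewed", "unit tests passing"},
    "STORY-3": {"code reviewed"},
}


def audit(stories: dict[str, set[str]], dod: set[str]) -> Counter:
    """Count how often each DoD item was skipped across stories marked done."""
    missed = Counter()
    for completed_items in stories.values():
        missed.update(dod - completed_items)  # items required but not completed
    return missed


missed = audit(completed_stories, DEFINITION_OF_DONE)
for item, count in missed.most_common():
    print(f"{item}: missed in {count} of {len(completed_stories)} stories")
```

An item that tops this list repeatedly is a good starting point for the "why" conversation: it may be unclear, too expensive, or routinely squeezed out by time pressure.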

4. Empower the Team

The team owns the definition of done. They should be the ones to update it as tools and technologies change. If a new testing framework is adopted, the DoD should reflect that change. This ownership ensures the standards remain practical and effective.

5. Prioritize Quality Over Speed

When deadlines loom, there is a temptation to skip DoD items to meet the sprint goal. Resist this. A story that is not done is not a completed story. Delivering a half-finished feature is worse than delivering nothing. It creates debt that must be repaid later with interest.

📈 Measuring the Impact on Delivery 📈

How do you know if the link between user stories and the definition of done is working? Metrics provide insight into the health of the process. Tracking these indicators helps identify areas for improvement.

- Velocity Stability: Consistent velocity suggests that the DoD is realistic. If velocity fluctuates wildly, the DoD may be too strict or too loose.

- Defect Escape Rate: The share of defects found after release rather than before it. A strong DoD should minimize post-release defects.

- Rework Percentage: The amount of work returned for correction. Lower rework indicates better alignment with DoD.

- Lead Time: The time from when work on a story starts until it is done. If lead time grows without a matching gain in quality, the DoD may need to be streamlined.
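Three of these indicators reduce to simple arithmetic. The sketch below uses made-up sprint data and one common set of formulas. Teams define these metrics differently, so treat the variable names and calculations as assumptions rather than a standard.

```python
from datetime import date
from statistics import mean

# Hypothetical sprint records: (started, finished, reopened_for_rework)
stories = [
    (date(2024, 3, 1), date(2024, 3, 4), False),
    (date(2024, 3, 2), date(2024, 3, 8), True),
    (date(2024, 3, 5), date(2024, 3, 8), False),
    (date(2024, 3, 6), date(2024, 3, 10), False),
]
defects_found_before_release = 18  # hypothetical counts
defects_found_after_release = 2

# Share of all known defects that slipped past the DoD into production.
total_defects = defects_found_before_release + defects_found_after_release
defect_escape_rate = defects_found_after_release / total_defects

# Share of completed stories that came back for correction.
rework_percentage = 100 * sum(reopened for _, _, reopened in stories) / len(stories)

# Average calendar days from start to finish.
average_lead_time = mean((finished - started).days for started, finished, _ in stories)

print(f"Defect escape rate: {defect_escape_rate:.0%}")    # 10%
print(f"Rework percentage:  {rework_percentage:.0f}%")    # 25%
print(f"Average lead time:  {average_lead_time} days")    # 4 days
```

Tracked sprint over sprint, the trend in these numbers matters more than any single value.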

Understanding Technical Debt

One of the primary benefits of a strict definition of done is the management of technical debt. Every time a story is completed without meeting the DoD, debt is incurred. Over time, this debt slows down development significantly.

By maintaining the link, teams ensure that every story contributes to a stable codebase. This stability allows for faster development in the long run. It is an investment in future velocity.

🌱 Evolving the Definition of Done

The definition of done is not static. It evolves as the team matures, as tools change, and as the product grows. A DoD that works for a startup may not work for an enterprise. The key is to keep it living.

When to Update the DoD

Consider updating the definition of done when:

- A new technology is introduced into the stack.

- A security vulnerability exposes a gap in the workflow.

- Regulatory requirements change.

- The team consistently finds bottlenecks in a specific DoD item.

- Customer feedback indicates a gap in quality.

Removing Items

Just as you add items, you may need to remove them. If a task becomes automated or obsolete, remove it from the list. Automation can often replace manual checks. For example, if code formatting is now handled by an automated linter, manual checks for formatting can be removed from the DoD to save time.

🤝 Collaboration is Key

The relationship between user stories and the definition of done relies on collaboration. It requires input from developers, testers, product owners, and operations. No single role can define what “done” means for the whole product.

Roles in the Process

- Developers: Ensure code quality, unit tests, and peer reviews are met.

- Testers: Verify acceptance criteria and perform integration testing.

- Product Owners: Confirm that the value delivered matches the user story goal.

- Operations: Validate deployment processes and monitoring setup.

When these roles communicate effectively, the definition of done becomes a shared contract. It ensures that everyone agrees on the quality bar before work begins.

🔮 Future-Proofing the Link

As software development practices evolve, the link between stories and the definition of done must adapt. Automation and continuous integration play a larger role. The definition of done should increasingly include automated checks.

In the future, the definition of done may become even more integrated into the code itself. Tools that automatically block merges if certain criteria are not met will become standard. This shifts the quality gate from a human checklist to a system enforcement.
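As a rough illustration of system enforcement, a merge gate can be modeled as a set of named checks that must all pass. The check functions below are hypothetical stand-ins for real pipeline steps (a test runner, a security scanner, a review API), and `merge_gate` is an invented name, not part of any particular CI product.

```python
from typing import Callable


# Hypothetical stand-ins for real CI steps; each returns True when its DoD item is met.
def tests_pass() -> bool:
    return True


def security_scan_clean() -> bool:
    return False  # simulate a failing scan


def has_peer_approval() -> bool:
    return True


DOD_CHECKS: dict[str, Callable[[], bool]] = {
    "unit tests passing": tests_pass,
    "security scan passed": security_scan_clean,
    "code reviewed by peers": has_peer_approval,
}


def merge_gate(checks: dict[str, Callable[[], bool]]) -> tuple[bool, list[str]]:
    """Run every check and return (merge_allowed, names_of_failed_items)."""
    failed = [name for name, check in checks.items() if not check()]
    return (not failed, failed)


allowed, failed = merge_gate(DOD_CHECKS)
if not allowed:
    print("Merge blocked; unmet DoD items:", ", ".join(failed))
```

In a real pipeline, a script like this would typically exit with a non-zero status on failure so that the hosting platform refuses the merge.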

💡 Summary of Best Practices

To summarize, maintaining a strong link between user stories and the definition of done requires discipline and continuous improvement. Here are the core takeaways:

- Clarity: Ensure every story has clear acceptance criteria.

- Consistency: Apply the definition of done to every story without exception.

- Visibility: Make the standards visible to the entire team.

- Evolution: Review and update the definition of done regularly.

- Quality First: Prioritize long-term stability over short-term speed.

By treating the definition of done as an integral part of the user story rather than an afterthought, teams can deliver high-quality software consistently. This approach builds trust with stakeholders and creates a sustainable development environment.

🚀 Final Thoughts

The connection between user stories and the definition of done is the backbone of reliable delivery. It transforms vague requests into concrete, tested, and valuable increments. When this link is strong, the team operates with clarity and purpose.

It is not about following rules for the sake of rules. It is about respecting the craft of software development. Every line of code, every test, and every deployment matters. By aligning the story goal with the quality standards, teams ensure that they are building something that lasts.

Start by reviewing your current definition of done. Is it clear? Is it followed? Does it support your user stories? If the answer is yes, you are on the right path. If not, use this as an opportunity to refine your process. The goal is always to deliver value that stands the test of time.