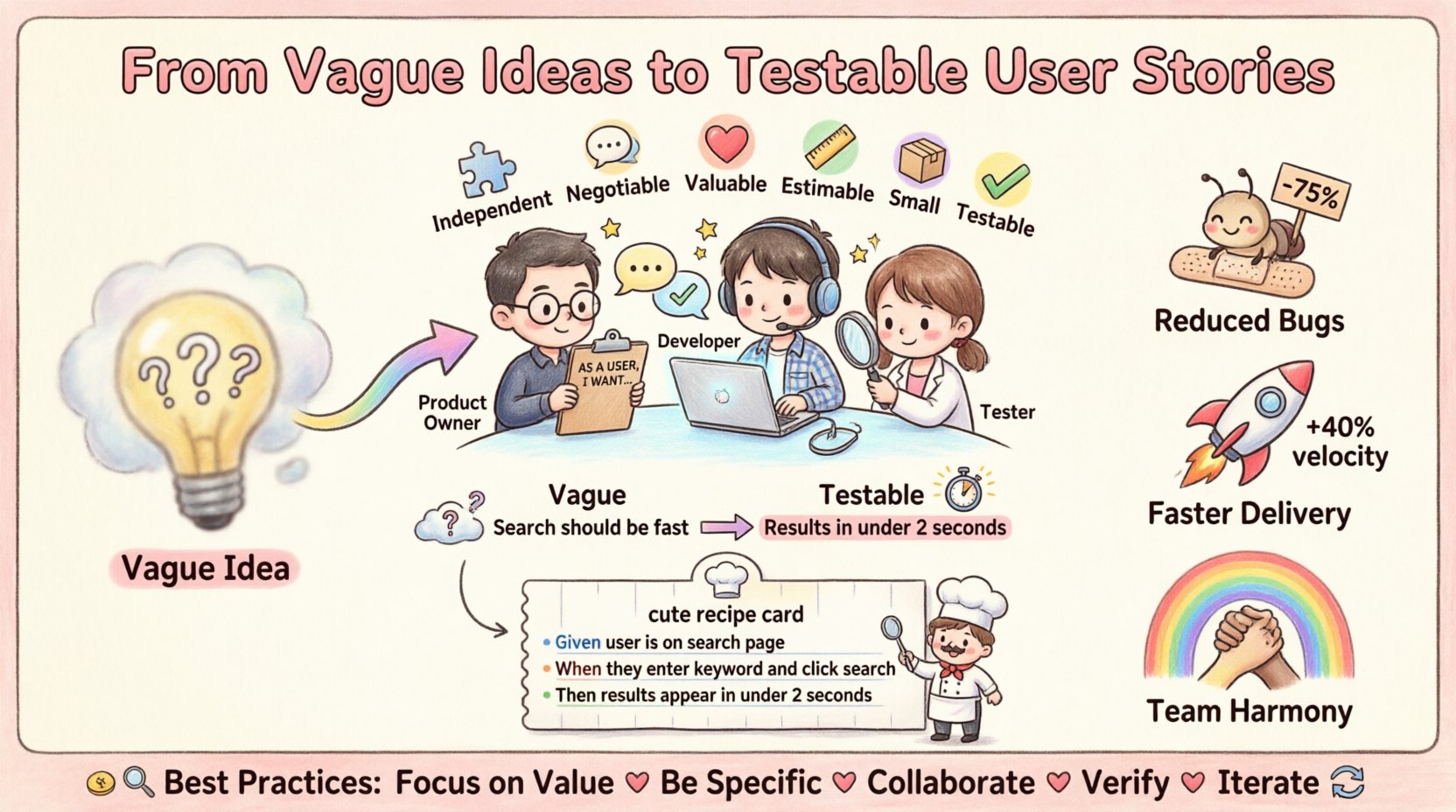

Every product team begins with an idea. It starts as a spark, a conversation over coffee, or a note on a whiteboard. This spark is often called a vague idea. It holds potential, but it lacks structure. Without structure, an idea cannot become software that solves real problems. The bridge between a fuzzy concept and a working feature is the testable user story.

Many teams struggle here. They write requirements that are open to interpretation. Developers build one way, testers test another, and the product owner feels the result missed the mark. This misalignment costs time, money, and morale. The solution lies in precision. By transforming vague ideas into testable user stories, teams gain clarity, predictability, and quality.

This guide explores how to refine raw concepts into actionable items. We will look at the anatomy of a strong story, the role of acceptance criteria, and the importance of collaboration. There are no magic tools here, just proven practices for better delivery.

What Is a Testable User Story? 🧐

A user story is not just a ticket in a tracking system. It is a commitment to a conversation. It describes a capability from the perspective of an end user. However, a story is only valuable if it can be verified. If you cannot write a test case for it, it is not ready.

Testability means that the behavior can be observed and measured. It removes ambiguity. When a story is testable, everyone knows what "done" looks like before work begins. This shifts the focus from output to outcome.

A well-formed story names three elements:

- Role: Who is asking for this feature?

- Goal: What do they want to achieve?

- Benefit: Why does it matter to the business or user?

Without these elements, a story is just a task. A task is an instruction. A story is a value proposition. The goal is to ensure every story delivers value that can be validated.
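The three elements above can be captured in a small data structure. The following is a minimal sketch, not a prescribed format: the `UserStory` class, its field names, and the `is_ready` rule are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class UserStory:
    """Hypothetical container for the three elements of a story."""
    role: str      # who is asking for the feature
    goal: str      # what they want to achieve
    benefit: str   # why it matters to the business or user
    acceptance_criteria: list[str] = field(default_factory=list)

    def as_sentence(self) -> str:
        # Render the common "As a / I want / so that" template.
        return f"As a {self.role}, I want to {self.goal} so that {self.benefit}."

    def is_ready(self) -> bool:
        # Assumed rule: all three elements are filled in and at least
        # one acceptance criterion exists to verify against.
        has_elements = all(s.strip() for s in (self.role, self.goal, self.benefit))
        return has_elements and len(self.acceptance_criteria) > 0


story = UserStory(
    role="manager",
    goal="export my data",
    benefit="I can analyze it offline",
)
print(story.as_sentence())
print(story.is_ready())  # no acceptance criteria yet, so not ready
```

A check like `is_ready` is only a gate on form. Whether the criteria are meaningful still depends on the conversation behind them.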

The Cost of Vagueness 📉

When requirements are vague, the team pays a price. This price is not just in currency; it is in cognitive load and time. Understanding the consequences helps motivate the shift toward precision.

1. Rework and Waste

If a developer assumes a feature should work one way, but the product owner intended another, the code must be rewritten. This is waste. It consumes resources that could have been used for new features. Vagueness leads to assumptions, and assumptions lead to errors.

2. Testing Gaps

Testers cannot create a robust test suite if the requirements are unclear. They will guess. If they guess wrong, bugs slip into production. Fixing a bug after release is more expensive than building the behavior correctly the first time. Clear stories provide the script for testing.

3. Team Friction

Disagreements arise when expectations differ. Developers blame product owners for unclear specs. Product owners blame developers for missing the vision. A testable story acts as a shared contract. It aligns the team around a single definition of success.

The INVEST Model for Quality 🏗️

To ensure stories are testable, they must meet specific quality criteria. The INVEST model provides a checklist. Each letter represents a characteristic of a good story.

| Letter | Meaning | Why It Matters |
|---|---|---|
| I | Independent | Stories should not rely on others to be delivered. |
| N | Negotiable | Details are discussed, not fixed in stone. |
| V | Valuable | It must deliver value to the user or business. |
| E | Estimable | The team must be able to size the effort. |
| S | Small | Large stories are hard to test and manage. |
| T | Testable | Acceptance criteria must be verifiable. |

Focus heavily on Small and Testable. Large stories hide complexity. They are often too big to test in a single iteration. Breaking them down reduces risk. If a story is too big, split it. Split by function, by user type, or by data volume.

Writing Acceptance Criteria 📝

Acceptance criteria are the guardrails of a user story. They define the boundaries of the feature. They answer the question: What conditions must be met for this story to be accepted?

There are several ways to write them. The most common method uses scenarios. This approach describes the behavior in a specific context. It avoids abstract language.

Bad vs. Good Examples

Compare the following examples to see the difference between vague and testable language.

| Feature | Vague (Avoid) | Testable (Use) |
|---|---|---|
| Search | Search should be fast. | Search results appear in under 2 seconds for 100 items. |
| Login | User can log in. | User enters valid credentials and clicks Submit; dashboard loads. Invalid credentials show an error message. |
| Export | Export data to PDF. | System generates a PDF file containing the current table view. File downloads automatically upon request. |

Notice the difference in the Testable column. It includes specific conditions, expected results, and measurable metrics. The word fast is subjective. 2 seconds is objective.
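A measurable criterion translates almost directly into an automated check. The sketch below shows the search criterion as a plain Python test. The `search` function is a hypothetical stand-in for the real feature, and the data set is invented for illustration.

```python
import time


def search(items: list[str], query: str) -> list[str]:
    # Hypothetical stand-in for the real search: a case-insensitive substring match.
    return [item for item in items if query.lower() in item.lower()]


def test_search_returns_within_two_seconds_for_100_items() -> None:
    items = [f"product {n}" for n in range(100)]

    start = time.perf_counter()
    results = search(items, "product 4")
    elapsed = time.perf_counter() - start

    # Matches "product 4" plus "product 40" through "product 49".
    assert len(results) == 11
    # The objective threshold taken from the acceptance criterion.
    assert elapsed < 2.0


test_search_returns_within_two_seconds_for_100_items()
```

The vague version, "search should be fast", offers no line like `elapsed < 2.0` to write, which is a quick way to spot a criterion that is not yet testable.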

Types of Acceptance Criteria

Different stories require different types of criteria. Do not force one format onto every item.

- Business Rules: Specific logic or calculations. (e.g., Tax is 10% for orders over $50).

- UI Behavior: How the interface reacts. (e.g., Button turns green on success).

- Performance: Speed or load limits. (e.g., Page loads in 1 second).

- Error Handling: What happens when things go wrong. (e.g., Display error code 404).

- Security: Access control requirements. (e.g., Only admin can delete records).
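Two of the criteria types above, a business rule and a security rule, are sketched below as executable checks. The function names are hypothetical, and the treatment of an order of exactly $50 is an assumption that the team would need to confirm during refinement.

```python
from decimal import Decimal

TAX_RATE = Decimal("0.10")        # from the example rule: 10%
TAX_THRESHOLD = Decimal("50.00")  # the rule applies to orders over $50


def tax_for(order_total: Decimal) -> Decimal:
    # Assumption: "over $50" means strictly greater, so exactly $50 is not taxed.
    if order_total > TAX_THRESHOLD:
        return (order_total * TAX_RATE).quantize(Decimal("0.01"))
    return Decimal("0.00")


def can_delete_record(user_role: str) -> bool:
    # Security criterion: only admin can delete records.
    return user_role == "admin"


# Boundary values are where vague rules usually break.
assert tax_for(Decimal("50.00")) == Decimal("0.00")
assert tax_for(Decimal("50.01")) == Decimal("5.00")
assert tax_for(Decimal("80.00")) == Decimal("8.00")
assert can_delete_record("admin")
assert not can_delete_record("editor")
```

Writing the boundary assertion forces the question "is $50 itself taxed?", which the prose rule leaves open.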

The Gherkin Syntax Structure 📋

For complex logic, a structured format helps. Gherkin is a language-agnostic way to describe behavior. It uses plain text to define scenarios. This makes it readable for non-technical stakeholders.

The structure follows three main keywords:

- Given: The initial context or state.

- When: The action or event that occurs.

- Then: The expected outcome or result.

This structure forces the writer to think about the flow. It prevents missing steps. It also aligns with automated testing frameworks.

Example Scenario

Imagine a story about resetting a password. Here is how it might look in Gherkin format:

    Feature: Password Reset

      Scenario: User requests a password reset
        Given the user is on the login page
        When the user clicks the "Forgot Password" link
        And submits their registered email address
        Then the system sends a reset email to their registered address

      Scenario: User enters an invalid email
        Given the user is on the login page
        When the user clicks the "Forgot Password" link
        And enters an email that does not exist
        Then the system displays a generic success message

This format removes ambiguity. It states exactly what input leads to what output. It serves as documentation and test cases simultaneously.
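The Given/When/Then structure maps naturally onto the arrange, act, and assert steps of an automated test. The sketch below expresses the two password reset scenarios in plain Python. `FakeMailer`, `PasswordResetService`, and the message text are hypothetical names invented for this example, not part of any framework.

```python
class FakeMailer:
    """Hypothetical test double that records outgoing mail."""

    def __init__(self) -> None:
        self.sent_to: list[str] = []

    def send_reset_email(self, address: str) -> None:
        self.sent_to.append(address)


class PasswordResetService:
    """Hypothetical service; a real one would look users up in a database."""

    GENERIC_MESSAGE = "If the address is registered, a reset email has been sent."

    def __init__(self, registered: set[str], mailer: FakeMailer) -> None:
        self.registered = registered
        self.mailer = mailer

    def request_reset(self, email: str) -> str:
        if email in self.registered:
            self.mailer.send_reset_email(email)
        # The same message is returned either way, so the response
        # does not reveal whether the address exists.
        return self.GENERIC_MESSAGE


def test_registered_user_requests_reset() -> None:
    # Given a registered user
    mailer = FakeMailer()
    service = PasswordResetService({"ana@example.com"}, mailer)
    # When the user requests a password reset
    service.request_reset("ana@example.com")
    # Then the system sends a reset email to the registered address
    assert mailer.sent_to == ["ana@example.com"]


def test_unknown_email_gets_generic_message() -> None:
    # Given an email that does not exist in the system
    mailer = FakeMailer()
    service = PasswordResetService({"ana@example.com"}, mailer)
    # When a reset is requested for it
    message = service.request_reset("nobody@example.com")
    # Then a generic success message is shown and no email is sent
    assert message == PasswordResetService.GENERIC_MESSAGE
    assert mailer.sent_to == []


test_registered_user_requests_reset()
test_unknown_email_gets_generic_message()
```

Teams that use a BDD tool would bind the Gherkin steps to code like this through the tool's own step definitions. The plain functions here only show the shape of the mapping.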

Collaboration is Key 🤝

Writing a story in isolation often leads to gaps. The best stories come from collaboration. This involves bringing the product owner, developers, and testers together.

The Three Amigos

This informal term refers to the trio of roles involved in refining a story. They meet before development starts.

- Product Owner: Defines the value and the business rules.

- Developer: Identifies technical constraints and implementation details.

- Tester: Identifies edge cases and potential failure points.

During this session, they review the draft story. They ask questions. They challenge assumptions. They refine the acceptance criteria together. This process is often called backlog refinement or story grooming.

Questions to Ask

During refinement, ask these questions to uncover hidden complexity:

- What happens if the network fails during this action?

- How does this feature behave on a mobile device?

- Are there any data privacy regulations to consider?

- What is the fallback if the external service is unavailable?

- Does this change affect existing data or reports?

Answering these questions early prevents surprises later. It builds shared understanding.

Common Pitfalls to Avoid 🕳️

Even experienced teams make mistakes. Awareness of common traps helps you steer clear of them.

1. The Solution Statement

Do not write stories that describe the solution. A story should describe the problem or the need. The team decides the solution during development.

Bad: “Add a button to export to Excel.”

Good: “As a manager, I need to export my data so I can analyze it offline.”

2. Technical Tasks as Stories

Refactoring or infrastructure work is not a user story. It is technical debt or maintenance. While important, it does not deliver direct user value in the same way. Track these separately.

3. Ignoring Non-Functional Requirements

Performance, security, and accessibility are not optional. They must be included in the acceptance criteria. Do not assume the system is secure by default.

4. Too Many Acceptance Criteria

If a story has 50 acceptance criteria, it is likely too large. Split the story. Focus on the core value first. Add complexity in iterations.

Measuring Quality 📏

How do you know your stories are getting better? You need metrics. Track these indicators over time.

- Defect Rate: Are bugs found in testing decreasing? If acceptance criteria are clear, fewer bugs slip through.

- Rejection Rate: How often is a story returned during review? High rejection suggests unclear criteria.

- Velocity Consistency: Does the team estimate accurately? Clear stories lead to better estimates.

- Automation Coverage: Can you automate the acceptance criteria? High coverage indicates testable stories.

Review these metrics in retrospectives. Discuss what worked and what did not. Adjust your process accordingly. Continuous improvement is the goal.
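Two of these indicators are simple ratios that can be computed per sprint and compared over time. The following sketch assumes a hypothetical `SprintStats` record, and the numbers in it are invented sample data, not measurements.

```python
from dataclasses import dataclass


@dataclass
class SprintStats:
    """Hypothetical per-sprint counts gathered from the tracking system."""
    name: str
    stories_reviewed: int
    stories_rejected: int
    criteria_total: int
    criteria_automated: int


def ratio(part: int, whole: int) -> float:
    # Return 0.0 for an empty denominator instead of raising.
    return part / whole if whole else 0.0


def rejection_rate(s: SprintStats) -> float:
    return ratio(s.stories_rejected, s.stories_reviewed)


def automation_coverage(s: SprintStats) -> float:
    return ratio(s.criteria_automated, s.criteria_total)


# Invented sample data for illustration only.
sprints = [
    SprintStats("Sprint 1", 10, 4, 40, 10),
    SprintStats("Sprint 2", 12, 3, 48, 24),
]

for s in sprints:
    print(
        f"{s.name}: rejection {rejection_rate(s):.0%}, "
        f"automation {automation_coverage(s):.0%}"
    )
```

The trend across sprints matters more than any single value, so these figures are most useful when reviewed side by side in a retrospective.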

Real-World Scenarios 🌍

Let us look at how this applies in different contexts. The principles remain the same, but the details change.

Scenario A: E-Commerce Checkout

This is a critical flow. Errors are costly. Stories must cover every step.

- Story: Apply Discount Code.

- Criteria:

  - System validates code format.

  - System checks code expiration date.

  - System calculates new total price.

  - System displays error if code is invalid.

  - System prevents reuse of expired codes.
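The discount code criteria can be sketched as one function with a check per criterion. Everything specific here is an assumption for illustration: the code format (6 to 12 uppercase letters or digits), the percentage-based discount, the error messages, and the names `DiscountCode`, `DiscountError`, and `apply_discount`.

```python
import re
from dataclasses import dataclass
from datetime import date
from decimal import Decimal

# Assumed format: 6 to 12 uppercase letters or digits.
CODE_FORMAT = re.compile(r"[A-Z0-9]{6,12}")


@dataclass(frozen=True)
class DiscountCode:
    code: str
    percent_off: int
    expires_on: date  # the code is valid through this day


class DiscountError(ValueError):
    """Carries the message the checkout page would display."""


def apply_discount(
    total: Decimal,
    entered: str,
    known_codes: dict[str, DiscountCode],
    today: date,
) -> Decimal:
    # Criterion: system validates code format.
    if not CODE_FORMAT.fullmatch(entered):
        raise DiscountError("Code format is invalid.")
    # Criterion: system displays error if code is invalid.
    discount = known_codes.get(entered)
    if discount is None:
        raise DiscountError("Code is not recognized.")
    # Criteria: system checks expiration date and rejects expired codes.
    if today > discount.expires_on:
        raise DiscountError("Code has expired.")
    # Criterion: system calculates new total price.
    factor = Decimal(100 - discount.percent_off) / Decimal(100)
    return (total * factor).quantize(Decimal("0.01"))


codes = {"SPRING20": DiscountCode("SPRING20", 20, date(2024, 6, 30))}
new_total = apply_discount(Decimal("80.00"), "SPRING20", codes, date(2024, 6, 1))
print(new_total)
```

Passing `today` as a parameter rather than reading the system clock keeps the expiration criterion testable with fixed dates.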

Scenario B: Reporting Dashboard

Data accuracy is paramount here.

- Story: Filter Reports by Date Range.

- Criteria:

  - System defaults to last 30 days.

  - System allows custom start and end dates.

  - System excludes data outside the selected range.

  - System handles weekends and holidays correctly.
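The date range criteria hide several decisions that a sketch makes visible. The version below assumes that "last 30 days" means 30 calendar days ending today, that both bounds are inclusive, and that weekend rows are kept like any other day. The function names and row shape are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

DEFAULT_WINDOW_DAYS = 30


def resolve_range(
    today: date,
    start: Optional[date] = None,
    end: Optional[date] = None,
) -> tuple[date, date]:
    # Criterion: default to the last 30 days (assumed inclusive of today).
    end = end or today
    start = start or end - timedelta(days=DEFAULT_WINDOW_DAYS - 1)
    if start > end:
        raise ValueError("Start date must not be after end date.")
    return start, end


def filter_rows(
    rows: list[tuple[date, int]], start: date, end: date
) -> list[tuple[date, int]]:
    # Criterion: exclude data outside the selected range.
    # Weekends and holidays are ordinary calendar days here (assumption).
    return [row for row in rows if start <= row[0] <= end]


rows = [
    (date(2024, 3, 1), 10),   # one day before the default window
    (date(2024, 3, 2), 20),   # first day of the default window
    (date(2024, 3, 30), 30),  # a Saturday, kept like any other day
    (date(2024, 4, 1), 40),   # after the window
]

start, end = resolve_range(today=date(2024, 3, 31))
print(filter_rows(rows, start, end))
```

"Handles weekends and holidays correctly" is itself a vague criterion. Writing the code shows that the team still has to agree on what "correctly" means before it can be tested.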

Scenario C: User Profile Management

Security and data integrity are key.

- Story: Update Profile Picture.

- Criteria:

  - System accepts JPG and PNG formats.

  - System limits file size to 5MB.

  - System displays thumbnail in grid view.

  - System removes old images from storage.
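The first two criteria are easy to express as a validation function. This sketch assumes that "5MB" means 5 × 1024 × 1024 bytes and that `.jpeg` counts as JPG. It checks only the file name extension, which a real system would likely supplement with an inspection of the file contents. The function name and messages are hypothetical.

```python
import os

MAX_BYTES = 5 * 1024 * 1024  # assumption: "5MB" is read as 5 MiB
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def validate_profile_picture(filename: str, size_bytes: int) -> list[str]:
    """Return a list of user-facing errors; an empty list means the file is accepted."""
    errors: list[str] = []
    extension = os.path.splitext(filename)[1].lower()
    # Criterion: system accepts JPG and PNG formats.
    if extension not in ALLOWED_EXTENSIONS:
        errors.append("Only JPG and PNG files are accepted.")
    # Criterion: system limits file size to 5MB.
    if size_bytes > MAX_BYTES:
        errors.append("File must be 5 MB or smaller.")
    return errors


print(validate_profile_picture("me.PNG", 1024))
print(validate_profile_picture("me.gif", MAX_BYTES + 1))
```

Returning all errors at once, rather than stopping at the first, is a design choice that lets the interface show the user everything that needs fixing in one pass.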

The Definition of Done 🛑

Acceptance criteria are specific to one story. The Definition of Done applies to all stories in the project. It is the checklist for quality that is always active.

A story is not done until:

- Code is written.

- Code is reviewed.

- Tests are passing.

- Documentation is updated.

- Performance standards are met.

- Security scan is clear.

This ensures consistency. It prevents technical debt from accumulating. It guarantees that every story delivered is usable.
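Because the Definition of Done is the same for every story, it can be held in one place and checked mechanically. The sketch below is one possible shape. The item labels mirror the checklist above, and the `missing_items` helper is a hypothetical name.

```python
# The shared checklist, applied to every story regardless of its acceptance criteria.
DEFINITION_OF_DONE = (
    "code written",
    "code reviewed",
    "tests passing",
    "documentation updated",
    "performance standards met",
    "security scan clear",
)


def missing_items(completed: set[str]) -> list[str]:
    # Keep checklist order so the report reads the same way every time.
    return [item for item in DEFINITION_OF_DONE if item not in completed]


def is_done(completed: set[str]) -> bool:
    return not missing_items(completed)


progress = {"code written", "code reviewed", "tests passing"}
print(missing_items(progress))
print(is_done(progress))
```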

Iterative Refinement 🔄

Stories are not static. They evolve. As you learn more about the system, you may need to update them. This is not a failure; it is part of the process.

Keep the backlog ready. Refine stories regularly. Do not wait until the sprint starts to ask questions. The best time to clarify is early. The cost of change tends to grow the later a misunderstanding surfaces, so a question answered before coding is far cheaper than one answered after.

Summary of Best Practices ✅

To wrap up the journey from vague to testable, remember these core points.

- Focus on Value: Always link back to the user need.

- Be Specific: Use numbers and clear conditions.

- Collaborate: Involve all roles in refinement.

- Verify: Ensure every story can be tested.

- Iterate: Improve stories based on feedback.

Adopting this mindset changes how a team operates. It builds trust. It improves speed. It results in software that actually works for the people who use it.