In agile software development, the user story is the basic unit of value delivery. It is more than a task description; it is a promise of functionality, a vehicle for communication, and a shared agreement between the development team and the stakeholders. When executed effectively, a story drives clarity, reduces waste, and accelerates delivery. When crafted poorly, stories become sources of ambiguity, rework, and friction. This article dissects the anatomy of a high-performing agile story, exploring the structural elements, refinement techniques, and quality standards required to ensure successful outcomes.

Why Stories Fail: The Cost of Ambiguity 🛑

Before diving into the construction of a perfect story, it is necessary to understand why stories often underperform. Ambiguity is the primary enemy of execution. When a story lacks specificity, developers must make assumptions. Assumptions are not facts. Every assumption carries a risk of error. If a developer assumes a specific business logic based on a vague description, the resulting feature may not meet the actual user need. This leads to:

Rework: Building something that needs to be torn down later.

Delays: Time spent clarifying requirements during development.

Technical Debt: Implementing quick fixes to meet unclear expectations.

Team Frustration: Developers feel undervalued when their work is constantly questioned.

A high-performing story eliminates these risks by providing a clear, testable, and agreed-upon scope before work begins. It shifts the conversation from “What should we build?” to “How do we build this efficiently?”

The Three Cs: The Foundation of a User Story 🃏

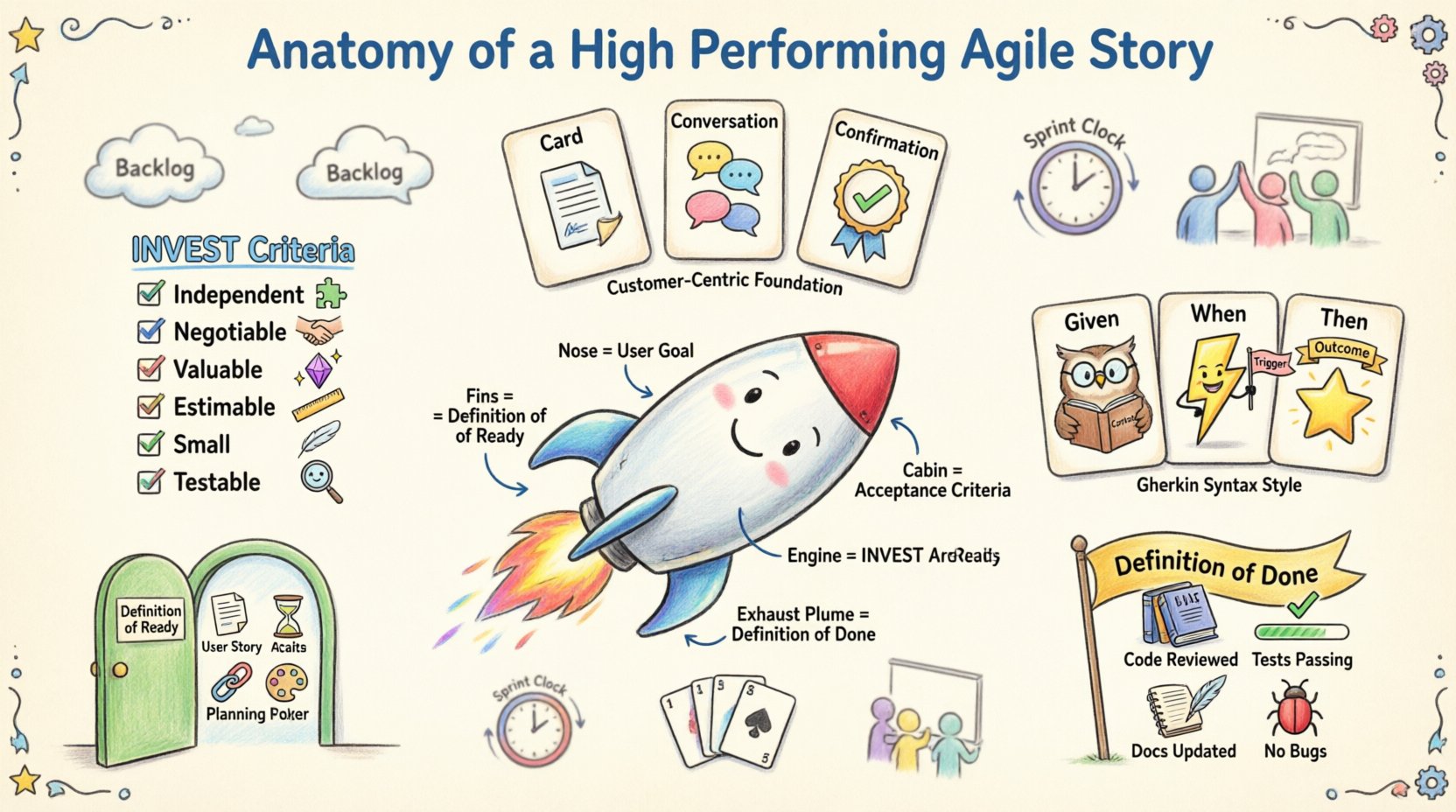

User stories are commonly described through a simple model known as the Three Cs. This model ensures that stories remain flexible, conversational, and valuable.

Card: The written record of the story. It captures the essence of the requirement in a concise format.

Conversation: The dialogue between the product owner, developers, and testers. This is where the details are fleshed out.

Confirmation: The acceptance criteria that define success. These are the tests that verify the story is complete.

Ignoring any of these three components weakens the story. A card without conversation is a specification document that cannot change. A conversation without confirmation leaves no definition of completion. A confirmation without a card lacks context.

Structuring the Card: The INVEST Criteria 📝

To ensure a story is actionable and valuable, it should adhere to the INVEST model. This acronym serves as a checklist for story quality. Every high-performing story should be:

1. Independent (I)

Stories should be as self-contained as possible. Dependencies on other stories create bottlenecks. If Story A cannot be completed without Story B, the team loses the ability to prioritize and deliver value in isolation. While some dependencies are inevitable, the goal is to minimize them.

2. Negotiable (N)

A story is not a contract; it is an invitation to discuss. The details of the implementation should be open to negotiation between the team and the product owner. This flexibility allows developers to suggest technical improvements or alternative solutions that achieve the same value with less effort.

3. Valuable (V)

Every story must deliver value to the user or the business. If a story does not contribute to a measurable outcome or user need, it should be questioned. Value is the primary filter for backlog prioritization.

4. Estimable (E)

The team must be able to estimate the effort required. If a story is too vague to estimate, it is not ready for the sprint. Estimation requires understanding the scope, complexity, and risks involved.

5. Small (S)

Stories should be small enough to be completed within a single iteration. Large stories are difficult to estimate and risky to deliver. Breaking a large story into smaller stories reduces risk and increases feedback frequency.

6. Testable (T)

This is the most critical aspect for quality. A story must have clear criteria for testing. If you cannot write a test case for it, you cannot verify if it is done. Testability ensures objectivity in the Definition of Done.

Acceptance Criteria: The Contract of Completion ✅

The Acceptance Criteria (AC) define the boundaries of the story. They are the specific conditions that must be met for the story to be accepted. The AC are not the same as the user story description. The story describes who needs something and what they need. The AC describe the observable conditions under which that need counts as satisfied; they do not prescribe the implementation, which stays negotiable.

Characteristics of Strong Acceptance Criteria

Clear and Concise: Avoid technical jargon that stakeholders cannot understand.

Specific: Use numbers and explicit conditions. Avoid words like “fast” or “secure” without defining metrics.

Atomic: Each criterion should test a single behavior.

Independent: Criteria should not depend on each other.

The Gherkin Syntax

Many teams use the Gherkin syntax (Given/When/Then) to structure acceptance criteria. This format promotes a shared understanding between business and technical teams.

| Keyword | Purpose | Example |
|---|---|---|
| Given | Establishes the initial context or state. | Given the user is logged in… |
| When | Describes the action or event. | When the user clicks the logout button… |
| Then | Defines the expected outcome. | Then the user is redirected to the login screen… |
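The three keywords map naturally onto the setup, action, and assertion steps of an automated test. The following is a minimal sketch in Python of the logout example from the table. The `Session` class and its methods are hypothetical stand-ins for a real application, invented here for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical stand-in for an application session (not a real API)."""
    logged_in: bool = False
    current_page: str = "login"

    def log_in(self) -> None:
        self.logged_in = True
        self.current_page = "dashboard"

    def click_logout(self) -> None:
        self.logged_in = False
        self.current_page = "login"


def test_logout_redirects_to_login() -> None:
    # Given the user is logged in
    session = Session()
    session.log_in()

    # When the user clicks the logout button
    session.click_logout()

    # Then the user is redirected to the login screen
    assert session.current_page == "login"
    assert not session.logged_in


test_logout_redirects_to_login()
```

Keeping one Given/When/Then scenario per test function mirrors the advice that each criterion should test a single behavior.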

Edge Cases and Non-Functional Requirements

High-performing stories also account for edge cases and non-functional requirements (NFRs). NFRs include performance, security, reliability, and accessibility. These should be explicitly stated in the acceptance criteria or as a sub-story.

Performance: “The page must load in under 2 seconds.”

Security: “User data must be encrypted at rest.”

Accessibility: “The form must be navigable via keyboard only.”
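A criterion with an explicit number can be checked mechanically, which is what makes it testable. As a rough sketch, the 2-second performance criterion above could be expressed in Python as follows. The helper names are assumptions made for this example, and `load_page` is a placeholder for whatever actually loads the page in a given project.

```python
import time
from typing import Callable

LOAD_BUDGET_SECONDS = 2.0  # taken from the example performance criterion


def measure_seconds(action: Callable[[], None]) -> float:
    """Run the action once and return the elapsed wall-clock time in seconds."""
    start = time.perf_counter()
    action()
    return time.perf_counter() - start


def within_budget(elapsed_seconds: float, budget: float = LOAD_BUDGET_SECONDS) -> bool:
    """True only when the elapsed time is strictly under the budget."""
    return elapsed_seconds < budget


def load_page() -> None:
    """Placeholder for the real page load; it does nothing here."""


elapsed = measure_seconds(load_page)
print(f"within budget: {within_budget(elapsed)}")
```

A vague criterion such as "the page must be fast" offers no equivalent of `LOAD_BUDGET_SECONDS`, so there is nothing to assert against.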

Definition of Ready (DoR) and Definition of Done (DoD) 🚦

Two critical concepts govern the lifecycle of a story: Definition of Ready and Definition of Done. These are team-specific agreements that ensure quality and flow.

Definition of Ready (DoR)

The DoR is a checklist that must be satisfied before a story enters a sprint. It ensures the team does not start work on incomplete or unclear items. A typical DoR includes:

Story is written in user story format.

Acceptance criteria are defined and agreed upon.

Estimation is complete.

Dependencies are identified.

Design assets are available.
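The checklist above can be sketched as a simple readiness gate. This Python example is illustrative only: the `Story` fields are hypothetical, and the regular expression assumes the common "As a ..., I want ..., so that ..." template, which each team may define differently.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Assumed template: "As a <role>, I want <goal>, so that <benefit>".
STORY_FORMAT = re.compile(r"^As an? .+, I want .+, so that .+", re.IGNORECASE)


@dataclass
class Story:
    """Hypothetical backlog item; field names are illustrative only."""
    text: str
    acceptance_criteria: List[str] = field(default_factory=list)
    criteria_agreed: bool = False
    estimate_points: Optional[int] = None
    dependencies_identified: bool = False
    design_assets_available: bool = False


def unmet_ready_items(story: Story) -> List[str]:
    """Return the Definition of Ready items the story does not yet satisfy."""
    checks = {
        "Story is written in user story format": bool(STORY_FORMAT.match(story.text)),
        "Acceptance criteria are defined and agreed upon": (
            bool(story.acceptance_criteria) and story.criteria_agreed
        ),
        "Estimation is complete": story.estimate_points is not None,
        "Dependencies are identified": story.dependencies_identified,
        "Design assets are available": story.design_assets_available,
    }
    return [item for item, satisfied in checks.items() if not satisfied]


draft = Story(text="Improve the checkout")
for item in unmet_ready_items(draft):
    print("Not ready:", item)
```

Returning the list of unmet items, rather than a single yes or no, gives refinement sessions a concrete agenda for what still needs discussion.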

Definition of Done (DoD)

The DoD is the checklist that must be satisfied for a story to be considered complete. It ensures that the work is not just “finished” but “shippable”. A typical DoD includes:

Code is written and reviewed.

Unit tests are written and passing.

Integration tests are passing.

Documentation is updated.

Performance requirements are met.

No critical bugs remain.

Without a DoD, a story can be marked complete while still containing bugs or technical debt. Without a DoR, the team starts work with uncertainty.

The Refinement Process: Shaping the Backlog 🛠️

Stories do not appear fully formed. They require refinement, also known as backlog grooming. This is a continuous process where the team reviews upcoming stories to ensure they are ready for future sprints.

Key Activities in Refinement

Clarification: Discussing the details with the product owner to resolve ambiguities.

Decomposition: Breaking large stories into smaller stories that each still deliver value.

Estimation: Using techniques like Planning Poker to assign effort estimates.

Prioritization: Ensuring the most valuable stories are at the top of the backlog.

Risk Analysis: Identifying potential technical or business risks early.

Refinement should happen regularly, not just before a sprint planning session. This ensures the team is always prepared and avoids the rush of last-minute clarification.

Estimation Techniques: Predicting Effort 📊

Accurate estimation is crucial for sprint planning. However, estimation is not about predicting the future; it is about comparing relative complexity. Teams should avoid using hours as the primary unit of measure. Instead, use story points.

Story Points vs. Hours

Hours: Focuses on time. People work at different speeds. Time does not account for complexity or risk.

Story Points: Focuses on effort, complexity, and risk. It is relative. A story of 5 points is roughly two and a half times as complex as a story of 2 points.

Planning Poker

Planning Poker is a consensus-based technique. Each team member selects a card representing their estimate. When cards are revealed, discrepancies are discussed. This encourages open dialogue about risks and assumptions. The goal is not to guess perfectly, but to align understanding.
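One round of this technique can be sketched in a few lines of Python. The deck values, the participant names, and the rule that a round is "aligned" when the lowest and highest cards sit at most one step apart on the deck are all assumptions for this example; teams set their own conventions.

```python
from typing import Dict, List

DECK: List[int] = [1, 2, 3, 5, 8, 13]  # assumed deck; teams choose their own values


def reveal(votes: Dict[str, int], max_gap: int = 1) -> dict:
    """Summarise one round after all cards are revealed at the same time.

    The round counts as aligned when the lowest and highest cards are at most
    max_gap positions apart on the deck (an assumed rule, not a standard).
    """
    if not votes:
        raise ValueError("at least one vote is required")
    for name, card in votes.items():
        if card not in DECK:
            raise ValueError(f"{name} played {card}, which is not in the deck")

    low = min(votes.values())
    high = max(votes.values())
    gap = DECK.index(high) - DECK.index(low)
    return {
        "aligned": gap <= max_gap,
        # The people holding the extreme cards explain their reasoning first.
        "explain_low": sorted(n for n, c in votes.items() if c == low),
        "explain_high": sorted(n for n, c in votes.items() if c == high),
    }


result = reveal({"Ana": 3, "Ben": 3, "Chi": 8})
print(result["aligned"])       # False: 3 and 8 are two steps apart on the deck
print(result["explain_high"])  # ['Chi']
```

The function deliberately returns who should speak rather than an averaged number, since the value of the round lies in the discussion that the discrepancy triggers.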

Common Pitfalls to Avoid 🚫

Even experienced teams fall into traps when managing user stories. Recognizing these pitfalls helps maintain high performance.

1. The Ticket is the Story

Some teams treat the Jira ticket as the story itself. They forget the conversation. The ticket is just the record. The real story exists in the discussions, the designs, and the shared understanding.

2. Ignoring Technical Stories

Not every story is a user feature. Technical stories (spikes, refactoring, infrastructure) are essential for long-term health. They must be included in the backlog and prioritized.

3. Over-Engineering Acceptance Criteria

While acceptance criteria are vital, writing a novel for every story slows down development. Keep AC focused on the happy path and critical edge cases. Avoid unnecessary detail that changes frequently.

4. Neglecting the DoD

Skipping the Definition of Done leads to a “technical debt spiral.” Work accumulates, bugs increase, and velocity drops. Enforce the DoD strictly.

5. Varying Story Sizes

A sprint should ideally contain stories of similar size. If one story is an 8 and another is a 2, the variance creates instability. Aim for stories that fit within the team’s capacity.

Measuring Story Health 📈

To continuously improve, teams must measure the quality of their stories. Key metrics include:

Defect Rate: How many bugs are found after a story is marked done? High rates indicate weak acceptance criteria or DoD.

Reopen Rate: How many stories are reopened after closure? This suggests incomplete testing.

Refinement Duration: How long does it take to refine a story? Long durations suggest the team is struggling to understand requirements.

Velocity Stability: Does the team complete a consistent number of story points from sprint to sprint? Volatile velocity often points to unstable story sizing.
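These metrics reduce to simple arithmetic once the counts are available. The sketch below, in Python, assumes that defect and reopen rates are expressed per completed story and that velocity stability is summarised as a coefficient of variation (standard deviation divided by mean). Both definitions are choices made for this example, not fixed standards, and the sample numbers are invented.

```python
from statistics import mean, pstdev
from typing import List


def rate(events: int, stories_done: int) -> float:
    """Events per completed story, e.g. escaped defects or reopened stories."""
    if stories_done == 0:
        return 0.0
    return events / stories_done


def velocity_variation(velocities: List[float]) -> float:
    """Coefficient of variation of story points completed per sprint.

    0.0 means identical velocity every sprint; larger values mean more volatility.
    """
    average = mean(velocities)
    if average == 0:
        return 0.0
    return pstdev(velocities) / average


# Illustrative numbers only.
print(rate(events=3, stories_done=20))       # defect rate per completed story
print(velocity_variation([20, 20, 20]))      # perfectly stable velocity
print(velocity_variation([10, 30]))          # volatile velocity
```

Tracking the trend of each number across several sprints is usually more informative than reacting to any single value.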

The Human Element: Collaboration and Empathy 🤝

Technical standards are useless without human collaboration. A high-performing story relies on trust. The product owner must trust the team to deliver quality. The team must trust the product owner to provide clear direction. Empathy plays a role here. Developers need to understand the user’s pain point. Product owners need to understand the developer’s constraints.

When stories are treated as collaborative artifacts rather than assignments, engagement increases. Team members take ownership. They ask better questions. They propose better solutions. This culture of ownership is the true secret behind high-performing stories.

Iterative Improvement 🔄

Agile is about adaptation. Stories are not static documents. They evolve as the team learns. If a story is too large, split it. If a story is unclear, refine it. If a story is low value, deprioritize it. The process is never finished. Continuous improvement of the story format is as important as the delivery of the feature.

Regular retrospectives should include a review of the backlog. Discuss which stories caused confusion. Discuss which stories were easy to estimate. Use this feedback to adjust the DoR and refinement practices.

Summary of Best Practices 🏆

To summarize, building high-performing agile stories requires discipline, clarity, and collaboration. Here are the core takeaways:

Follow the 3 Cs: Card, Conversation, Confirmation.

Apply the INVEST criteria to every story.

Define clear Acceptance Criteria using Gherkin or similar logic.

Enforce Definition of Ready and Definition of Done.

Refine the backlog continuously, not just before sprints.

Use relative estimation (Story Points) over hours.

Measure quality through defect rates and reopen rates.

Foster a culture of collaboration and shared ownership.

By adhering to these principles, teams can transform their user stories from administrative overhead into powerful engines of value creation. The goal is not just to write stories, but to write stories that work.